WTH Are Agent Skills? The Missing Layer Between Prompts and Real Automation

AI agents can reason, but without structured actions they still guess at real-world workflows. This post explores the concept of AI agent skills and shows how LocalStack Skills provide deterministic, guardrailed automation for local AWS development.

Most AI agents these days are inching too close to the line of vibes for me.

You give them a prompt, some context, maybe a carefully crafted system message. They sound confident. The output looks right. But then you actually run what they gave you and something falls apart. A flag that does not exist. A command written for the wrong environment. A workflow that worked beautifully in the demo and nowhere else.

The model is usually not the problem. The architecture is.

For a while, we have been treating AI agents like very smart interns who just need better instructions. But what even the best intern needs, and what most agents still do not have, is a defined set of actions they are allowed to take. Known inputs. Expected outputs. Guardrails that make the behavior predictable.

That missing layer is agent skills. And once you see the gap, it is hard to unsee it.

Prompts help agents think. Skills help them act.

What Are Agent Skills?

At a practical level, a skill is a structured, machine-readable, reusable action that an AI agent can invoke deterministically.

Instead of the agent constructing behavior from scratch every time based on training data and best guesses, it calls a named action with defined parameters and gets back a predictable result.

The mental model I like is this: when you call a REST API, you are not hoping the server figures out what you meant. You send a structured request to a defined endpoint with documented behavior. Skills play a similar role for agent actions.

A well-designed skill defines:

- What it does: A clear, scoped capability.

- What it needs: Typed input parameters with constraints.

- What it returns: Structured output the agent can reason over.

- What it will not do: Boundaries that keep the agent from going off script.

Skills are contracts, not magic. And contracts are what can make automation more trustworthy.
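To make the contract idea concrete, here is a minimal Python sketch of those four parts. Everything in it (`SkillSpec`, `invoke`, `fake_deploy`, the field names) is hypothetical and made up for illustration; it is not part of any skills standard, just one way the shape could look.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class SkillSpec:
    """Hypothetical contract for one skill: scope, inputs, outputs, limits."""
    name: str
    description: str                  # what it does
    inputs: dict[str, type]           # what it needs (parameter name -> type)
    output_keys: tuple[str, ...]      # what it returns
    forbidden: tuple[str, ...] = ()   # what it will not do (documented boundaries)


def invoke(spec: SkillSpec, handler: Callable[..., dict[str, Any]], **params: Any) -> dict[str, Any]:
    """Check params against the contract, run the handler, then check the result."""
    unknown = set(params) - set(spec.inputs)
    missing = set(spec.inputs) - set(params)
    if unknown or missing:
        raise ValueError(f"{spec.name}: unknown={sorted(unknown)} missing={sorted(missing)}")
    for key, expected in spec.inputs.items():
        if not isinstance(params[key], expected):
            raise TypeError(f"{spec.name}: {key} must be {expected.__name__}")
    result = handler(**params)
    absent = [k for k in spec.output_keys if k not in result]
    if absent:
        raise RuntimeError(f"{spec.name}: result is missing {absent}")
    return result


DEPLOY = SkillSpec(
    name="deploy",
    description="Deploy one service version and report its health.",
    inputs={"service": str, "version": str},
    output_keys=("status", "version"),
    forbidden=("deleting resources", "touching other services"),
)


def fake_deploy(service: str, version: str) -> dict[str, Any]:
    # Stand-in handler for illustration; a real one would perform the deployment.
    return {"status": "healthy", "version": version}
```

Calling `invoke(DEPLOY, fake_deploy, service="api", version="2.3.1")` goes through; leaving out `version` or passing an extra parameter is rejected before anything runs.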

Prompts vs Skills: A Real Comparison

Here is what the same task looks like with and without skills.

- Task: Deploy an updated version of a service and verify that it is healthy.

- Prompt-only agent: The agent writes deployment commands from memory, guesses at your environment configuration, skips a step because it was not explicit in the prompt, and returns “deployment complete” before the health check even runs. You only find out later that something was off.

- Skill-enabled agent: The agent invokes a `deploy` skill with the service name and version. The skill handles environment-specific configuration, runs the health check as part of execution, and returns a structured result like `{ "status": "healthy", "version": "2.3.1", "endpoint": "..." }`. Now the agent actually knows what happened and can make a correct next decision.
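Here is a small sketch of why that structured result matters. It assumes the result arrives as a JSON string shaped like the example; the `unhealthy` status and the `rollback` and `escalate` actions are my own placeholders, not something a real deploy skill is known to return.

```python
import json


def next_action(raw_result: str) -> str:
    """Pick the next step from a deploy result shaped like the example."""
    result = json.loads(raw_result)
    status = result.get("status")
    if status == "healthy":
        return "done"
    if status == "unhealthy":   # hypothetical status value
        return "rollback"       # hypothetical follow-up action
    return "escalate"           # unknown or missing status: hand off to a human
```

The branch is a plain comparison on a field. With freeform text, the agent would have to infer the same thing from prose.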

| | Prompt-only Agent | Skill-enabled Agent |
|---|---|---|
| Command generation | Guesses from training data | Invokes a defined action |
| Environment handling | Assumes and often breaks | Parameterized and portable |
| Output | Freeform text | Structured, parseable result |
| Reproducibility | Inconsistent | (Mostly) Deterministic |
| Auditability | Hard to trace | Clear inputs and outputs |
| CI/CD readiness | Risky | Built for automation |

Wait, Isn’t That What MCP Is For?

Before going deeper on skills, it’s worth addressing the thing that trips up most people who’ve been following the AI agent space: Anthropic has shipped two different standards for AI agents, and most people conflate them or don’t know the second one exists.

In November 2024, Anthropic released the Model Context Protocol (MCP), an open standard for connecting AI agents to external data sources and tools. Think of it as a universal adapter. Instead of writing a custom integration every time your agent needs to talk to GitHub, Slack, or a database, you implement MCP once and plug into a growing ecosystem of connectors. It moved fast: there are now thousands of servers and SDKs for most major languages, and Anthropic has since donated it to the Linux Foundation as a vendor-neutral standard backed by Google, Microsoft, AWS, and others.

MCP solved the connectivity problem. But connectivity alone does not make agents reliable.

In October 2025, Anthropic launched Agent Skills, a different standard solving a different problem – not how agents connect to tools, but how they know what to do with them. Skills are organized folders of instructions, scripts, and resources that agents can discover and load dynamically. By December 2025, Anthropic published it as an open standard adopted by Microsoft, OpenAI, Atlassian, Figma, Cursor, and GitHub.

Here is how they actually differ:

| | MCP | Agent Skills |
|---|---|---|
| What it solves | Connecting agents to external systems | Giving agents domain expertise |
| The analogy | A universal API adapter | An onboarding guide for a new hire |
| What you build | A server with JSON-RPC tools and resources | A folder with a SKILL.md file |
| Infrastructure needed | Yes (server process, transport, auth) | None, just files |
| Complexity | High (protocol implementation, SDK setup) | Low, Markdown with YAML frontmatter |
| Best for | Tool connectivity at scale | Encoding workflows and domain knowledge |

MCP is the pipe. Skills are the knowledge that flows through it.

You might use both together: a skill that teaches an agent how to deploy infrastructure, backed by an MCP server that provides the connection to do it. But if you want to make your agent reliable at a specific workflow today, skills are the simpler starting point. No server to run. No protocol to implement. Just a folder and a Markdown file.

Why Skills Are Hard to Get Right (And Why Teams Skip Them)

If skills are so useful, why do most agents still not have them?

Honestly? Most people have never heard of them.

Anthropic introduced the Agent Skills standard in October 2025, and adopters such as Microsoft, OpenAI, Atlassian, Cursor, and GitHub only came on board in the months that followed. That is not very long ago. The ecosystem is still catching up, most platforms have not built skills yet, and the pattern has not had enough time to filter into the broader developer conversation the way MCP has. If this is the first time you are reading about skills, that is completely normal.

And even for teams who do know about them, good skills take real engineering discipline.

You have to scope them carefully – narrow enough to be reliable, but broad enough to be useful. You have to design the input and output contract. Think through failure modes. Decide what the agent is explicitly not allowed to do.

Most teams building with AI are moving fast. Prompts are fast. You can write one in five minutes and feel productive.

A well-designed skill takes more thought up front.

But the cost of skipping this layer shows up eventually. It shows up as sketchy automation you cannot quite explain. Agent behavior that differs between machines. The dreaded 2am incident that started with an agent confidently running…something.

The good news is the standard is simple. The investment is mostly in slowing down enough to think through what your agent actually needs to do reliably.

What Good Skills Look Like in Practice

When skills are designed well, they share a few important properties.

- Narrow scope. A skill should do one thing well. `analyze-logs` beats `do-devops-stuff` every time. The more focused the skill, the easier it is for the agent to use it correctly.

- Typed inputs. Freeform string parameters are usually a smell. Good skills define input types, required versus optional fields, and validation rules. This keeps garbage from quietly flowing downstream.

- Structured outputs. If your skill returns a wall of text, the agent has to parse it. Sometimes it will parse it wrong. Structured data gives the agent something reliable to reason over.

- Explicit failure modes. Skills should fail loudly and clearly. If something goes wrong, the agent needs to know what failed so it can decide what to do next.

- Minimal side effects. A skill should do exactly what it says and nothing extra – no surprise state changes or hidden dependencies. Predictability is the whole point.
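Here is a rough Python sketch of typed inputs, structured outputs, and explicit failure working together. The `analyze_logs` function, its parameters, and the error code are all invented for this example; treat it as an illustration of the properties, not as how any real log-analysis skill is implemented.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    ERROR = "ERROR"
    WARN = "WARN"


@dataclass(frozen=True)
class AnalyzeLogsInput:
    level: Level          # an enum instead of a freeform string
    max_lines: int = 100  # optional, with a bounded range

    def __post_init__(self) -> None:
        if not isinstance(self.level, Level):
            raise TypeError("level must be a Level")
        if not 1 <= self.max_lines <= 10_000:
            raise ValueError("max_lines must be between 1 and 10000")


def analyze_logs(lines: list[str], params: AnalyzeLogsInput) -> dict:
    """Count matching lines and return structured data, or a structured error."""
    if not lines:
        # Explicit failure: say what went wrong instead of returning an empty success.
        return {"ok": False, "error": "no_logs", "detail": "log source was empty"}
    window = lines[-params.max_lines:]
    matches = [ln for ln in window if params.level.value in ln]
    return {"ok": True, "level": params.level.value, "count": len(matches), "samples": matches[:3]}
```

Bad input is rejected when the parameters are constructed, and an empty log source comes back as a named error the agent can branch on. The function only reads the list it is given, so there are no side effects to worry about.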

Skills in the Wild: LocalStack Skills

This is where things get concrete.

LocalStack Skills is an open source collection of structured skills for AI agents working with LocalStack, which emulates AWS services locally. If you want to build and test cloud workflows without constantly touching real infrastructure, LocalStack is already a familiar tool. LocalStack Skills is the agent-friendly layer on top.

What makes this a strong example is the intentional scoping. Agents are not given broad access to LocalStack. Instead, each capability is exposed through a clearly defined skill.

Highlights include:

- LocalStack Lifecycle handles start, stop, restart, and status checks. It sounds basic, but reliable environment control is the foundation everything else depends on.

- IaC Deployment supports Terraform, CDK, CloudFormation, and Pulumi. This is where the pattern really shines. Infrastructure deployment is exactly the kind of multi-step workflow where prompt-only agents tend to get wobbly.

- State Management uses Cloud Pods to save and load environment snapshots. This is what makes agent workflows reproducible instead of “works on my machine adjacent.”

- Logs Analysis gives agents a structured way to pull and analyze LocalStack logs instead of dumping raw output into the context window and hoping for clarity.

- IAM Policy Analyzer analyzes IAM policies and generates least-privilege suggestions. A nice example of combining structured execution with higher-level reasoning.

Here is what one of those skill definitions actually looks like. This is the localstack-lifecycle skill:

---
name: localstack-lifecycle
description: >
  Use this skill to manage the LocalStack container lifecycle — starting, stopping, restarting, and checking status.
---

# LocalStack Lifecycle Management

## Starting LocalStack

Use the LocalStack CLI to start the container:

localstack start -d

Wait for it to be ready before proceeding:

localstack wait -t 30

## Checking Status

localstack status services

## Stopping LocalStack

localstack stop

That is the whole thing. YAML frontmatter so the agent knows when to invoke it, and plain Markdown instructions for what to do. The agent loads the name and description at startup and reads the full file only when the task matches. No server. No SDK. No infrastructure. Just a file.
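If you are curious what "load the name and description at startup" could look like, here is a minimal sketch using only the Python standard library. It assumes each skill lives in its own folder with a `SKILL.md` file, and the parser handles just enough frontmatter for a file like the one above (simple `key: value` pairs and folded `>` blocks). It is not a real YAML parser, and `read_frontmatter` and `build_index` are names I made up.

```python
from pathlib import Path


def read_frontmatter(text: str) -> dict[str, str]:
    """Parse a tiny subset of YAML frontmatter: `key: value` and folded `>` blocks."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    key = None
    for line in lines[1:]:
        if line.strip() == "---":
            break  # end of frontmatter; the Markdown body is not read here
        if line[:1].isspace() and key is not None:
            # Indented continuation of a folded block: join with single spaces.
            meta[key] = (meta[key] + " " + line.strip()).strip()
        elif ":" in line:
            key, _, value = line.partition(":")
            key = key.strip()
            value = value.strip()
            meta[key] = "" if value in (">", "|") else value
    return meta


def build_index(root: Path) -> dict[str, str]:
    """Map skill name -> description for every <root>/<skill>/SKILL.md."""
    index: dict[str, str] = {}
    for path in sorted(root.glob("*/SKILL.md")):
        meta = read_frontmatter(path.read_text(encoding="utf-8"))
        if "name" in meta:
            index[meta["name"]] = meta.get("description", "")
    return index
```

The index stays small because it only holds names and descriptions. The full instructions would be read later, and only for the skill whose description matches the task.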

The architecture is intentionally simple:

Agent
↓
Skills runtime
↓
LocalStack
↓
Local AWS services (emulated)

The agent never gets raw shell access. Every action flows through a skill. That is the guardrail that makes higher autonomy possible without inviting chaos.
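One way to picture that guardrail is an allowlist: the agent asks for an action by name, and only a fixed command can come out the other side. The sketch below is my own illustration, not how LocalStack Skills or any particular runtime enforces it. The commands are the ones from the lifecycle skill; the action names and the `resolve` and `plan` helpers are hypothetical.

```python
import shlex

# Commands taken from the lifecycle skill; the action names are hypothetical.
ALLOWED_ACTIONS: dict[str, list[str]] = {
    "start": ["localstack", "start", "-d"],
    "wait": ["localstack", "wait", "-t", "30"],
    "status": ["localstack", "status", "services"],
    "stop": ["localstack", "stop"],
}


def resolve(action: str) -> list[str]:
    """Return the fixed argv for a named action, or refuse."""
    try:
        return list(ALLOWED_ACTIONS[action])
    except KeyError:
        raise PermissionError(f"action {action!r} is not exposed as a skill") from None


def plan(actions: list[str]) -> list[str]:
    """Resolve every action up front so one bad request rejects the whole plan."""
    return [shlex.join(resolve(a)) for a in actions]
```

To actually execute a step, the argv list from `resolve` could be handed to `subprocess.run` without `shell=True`, so nothing the agent writes is ever interpreted by a shell.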

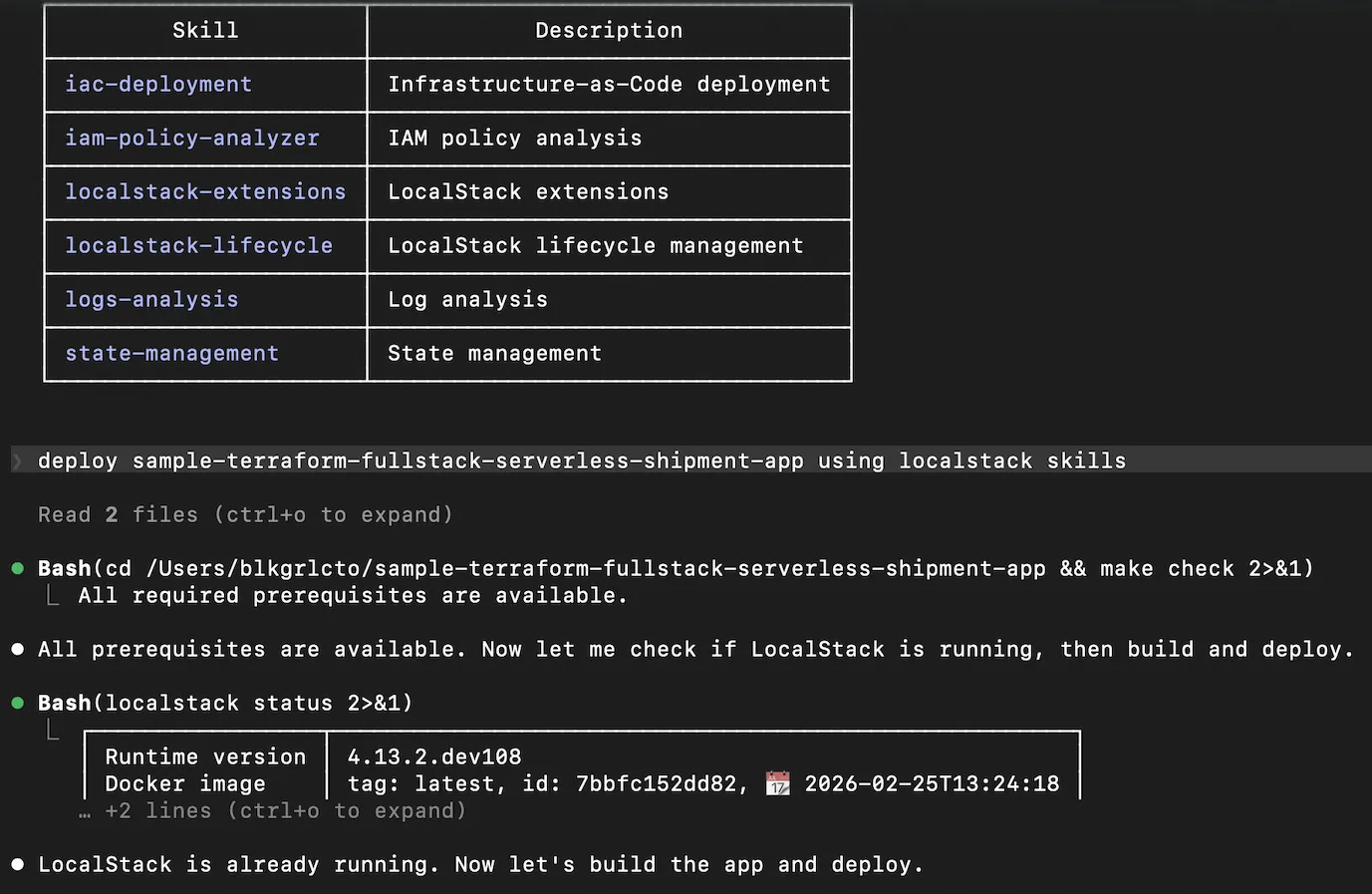

Here is what this looks like in practice with Claude Code and LocalStack Skills. Before the agent writes a single command, it reads the available skills — iac-deployment, localstack-lifecycle, logs-analysis, state-management, and others. That registry is the contract. The agent knows what actions exist, what they accept, and what they return before it does anything.

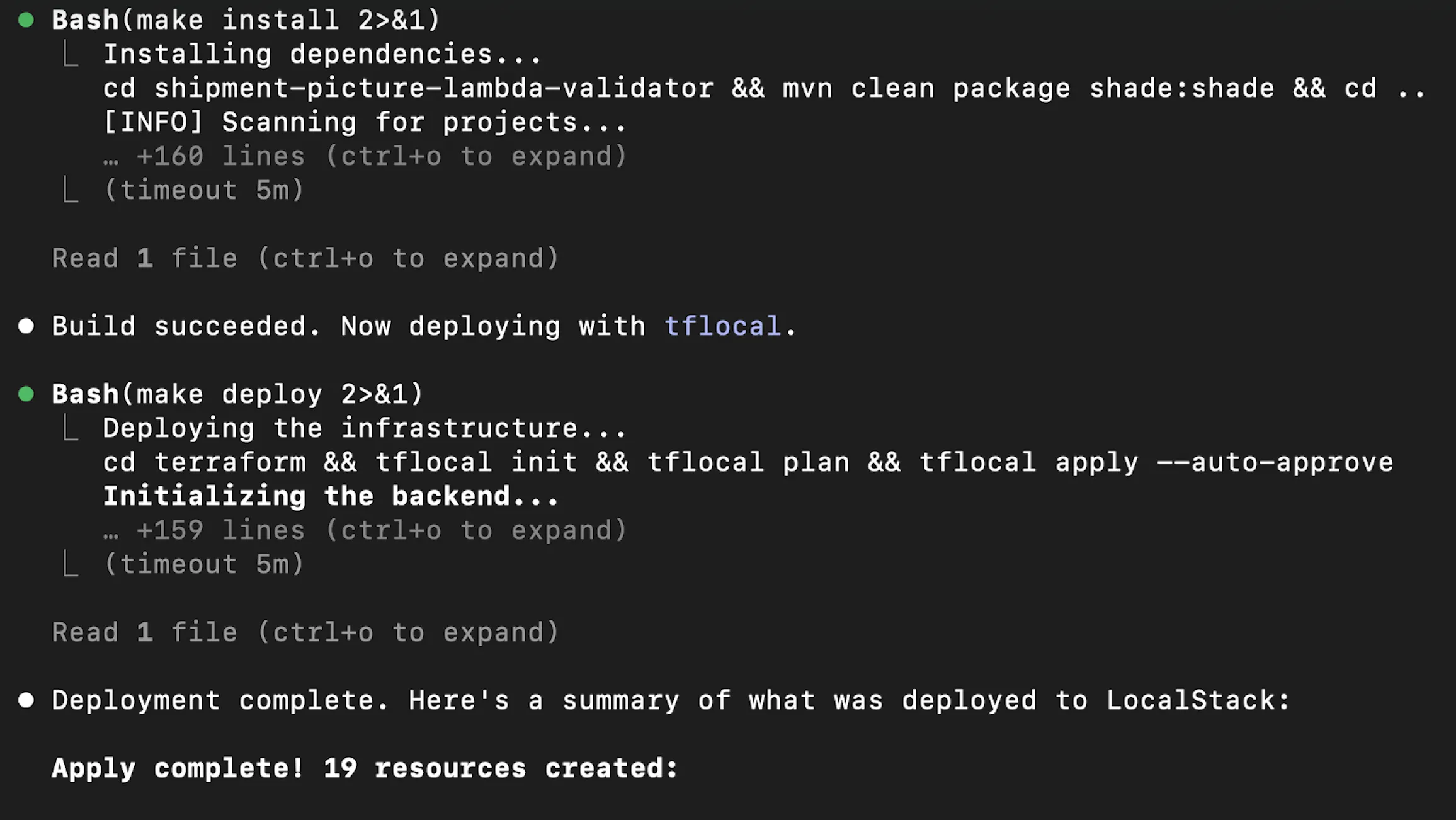

From there, the deployment unfolds in structured steps: prerequisites checked, LocalStack status confirmed, dependencies installed, Terraform applied via tflocal. Each step returns a result the agent can reason over. When the Terraform apply runs across S3, SQS, and SNS resources, the agent isn’t guessing — it’s reading structured output and deciding what comes next.

When Skills Actually Change the Game

Once skills are in place, the workflow starts to look very different. A typical flow might look like:

1. Agent checks environment status → Lifecycle returns { running: false }
2. Agent starts LocalStack → Lifecycle returns { running: true }
3. Agent loads known-good state → State Management restores snapshot
4. Agent deploys updated service → IaC Deployment returns stack outputs
5. Agent checks logs for errors → Logs Analysis returns { errors: 0 }
6. Agent surfaces warnings to dev → structured, reviewable output

Every step is defined. Every output is structured. You can replay it, audit it, and run it in CI without holding your breath.
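The replay-and-audit part is easier to see in code. This sketch runs a shortened version of that flow against stub skills and keeps a trail of every structured result. The stubs, the `ok` field, and `run_flow` are assumptions for illustration; real skills would call LocalStack instead of flipping a flag in a dictionary.

```python
from typing import Any, Callable

Step = tuple[str, Callable[[], dict[str, Any]]]


def run_flow(steps: list[Step]) -> list[dict[str, Any]]:
    """Run steps in order, record each structured result, stop on first failure."""
    trail: list[dict[str, Any]] = []
    for name, skill in steps:
        result = skill()
        trail.append({"step": name, "result": result})
        if result.get("ok") is False:
            break  # later steps never run on top of a failed one
    return trail


def make_stub_skills() -> list[Step]:
    """Build stand-in skills that share one fake environment."""
    env = {"running": False}

    def status() -> dict[str, Any]:
        return {"ok": True, "running": env["running"]}

    def start() -> dict[str, Any]:
        env["running"] = True
        return {"ok": True, "running": True}

    def load_state() -> dict[str, Any]:
        # Restoring a snapshot only makes sense once the environment is up.
        return {"ok": env["running"], "snapshot": "known-good"}

    def check_logs() -> dict[str, Any]:
        return {"ok": True, "errors": 0}

    return [("status", status), ("start", start),
            ("load_state", load_state), ("check_logs", check_logs)]
```

`run_flow(make_stub_skills())` returns one entry per step, in order. That list is the audit log: you can diff it between runs or attach it to a CI job.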

That is when agent automation starts to feel production-adjacent instead of demo-adjacent.

The Bigger Picture

Developer tooling is steadily moving toward an agent-native world.

The real question is not whether your platform will interact with AI agents. It is whether your platform is ready for them.

Readiness does not mean adding an AI feature. It means exposing structured, machine-readable interfaces for the actions agents need to take. It means designing developer experience that works for both humans and the automated teammates that are increasingly part of the workflow.

LocalStack Skills is one concrete example of how teams are starting to do this well. It will not be the last. The pattern is going to show up more and more as agent workflows mature.